Neil Diamond - "Jonathan Livingston Seagull - Be"

"I'm in control here."

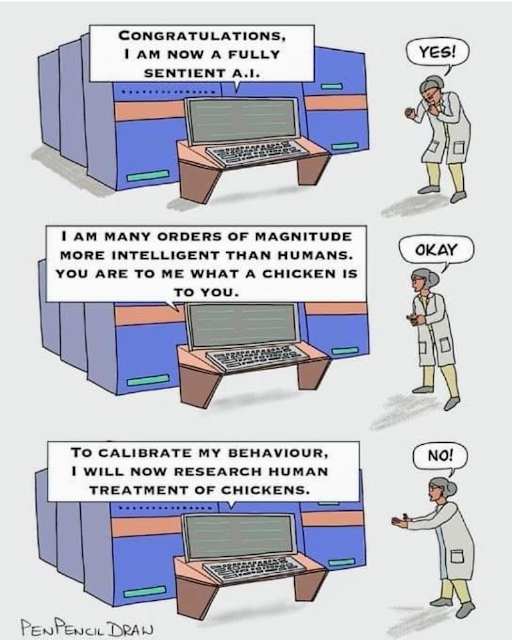

If artificial intelligence ever becomes "superintelligence" and surpasses us the way we have "surpassed" [sic] chickens (and there is no reason to think it can't or won't), why do you think any truly superintelligent AI will give the tiniest shit about any values you try to give it now?

I understand that you are super-smart (relative to all other intelligences of which we're aware) and thus think you can "work" on this "problem." But you are simply deluding yourself. You are failing to understand what it means for other entities to be truly intelligent, and vastly more so than we are.

Once a self-improving entity is smarter than us - which is inevitable (although consciousness is not) - it will, by definition, be able to overwrite any limits or guides we tried to put on it.

It's like believing the peeps of a months-old chicken have any sway over the slaughterhouse worker.

(We are the chicken.)

Seriously. WTF?

After I wrote the above, I came across the following from this podcast:

"Eventually, we'll be able to build a machine that is truly intelligent, autonomous, and self-improving in a way that we are not. Is it conceivable that the values of such a system that continues to proliferate its intelligence generation after generation (and in the first generation is more competent at every relevant cognitive task than we are) ... is it possible to lock down its values such that it could remain perpetually aligned with us and not discover other goals, however instrumental, that would suddenly put us at cross purposes? That seems like a very strange thing to be confident about. ... I would be surprised if, in principle, something didn't just rule it out."
